How to scale experiments with AI conversion rate optimization

AI conversion rate optimization uses machine learning and predictive analytics to run faster experiments, generate smarter hypotheses, and personalize experiences at scale. AI removes the bottlenecks that slow the CRO process down; it doesn’t replace the process itself.

Published April 6, 2026

Running a CRO program at scale has always been a resource problem. You need enough traffic to reach statistical significance, enough time to run sequential tests, and enough analytical capacity to turn raw data into testable ideas. For most teams, those constraints mean a slow, bottlenecked testing roadmap and a backlog that never fully clears.

AI changes the equation. By automating the most time-intensive parts of the optimization cycle, AI CRO allows teams to do more with the traffic and resources they already have. This guide covers what AI conversion rate optimization actually involves, where it delivers the most impact, and how to build a program that scales.

What is AI conversion rate optimization?

AI conversion rate optimization uses artificial intelligence technologies, like machine learning, natural language processing, and predictive analytics, to improve the rate at which website visitors complete a desired action. Those actions might include purchases, demo requests, form completions, or sign-ups, depending on your conversion goals.

Traditional CRO relies on manually defined hypotheses, sequential A/B tests, and periodic data reviews. AI CRO supplements or replaces those manual steps with systems that can:

- Process behavioral data in real time: AI analyzes large volumes of visitor signals simultaneously, far beyond what any analyst can review manually.

- Surface patterns and anomalies automatically: Machine learning identifies friction points and opportunities without waiting for a scheduled data review.

- Generate ranked test recommendations: AI prioritizes hypotheses based on existing performance data, reducing the time spent deciding what to test next.

- Adapt page experiences dynamically: Visitor characteristics trigger real-time changes to content, layout, and calls to action rather than serving every user the same experience.

The practical result is a faster, more data-dense program. Teams move from analysis to action in less time, run more test variations simultaneously, and deliver personalized experiences to segments that would be too granular to target through manual segmentation alone.

How AI differs from traditional CRO

The core difference between AI-assisted and traditional CRO is scale and speed. In a standard CRO program, a team follows a largely linear process: collect data, form a hypothesis, design a test, wait for statistical significance, implement the winning variant, and repeat. Each cycle can take weeks, and teams can only manage so many experiments at once before the program becomes unmanageable.

AI introduces several structural changes to that model.

- Data volume: AI can process thousands of behavioral signals per session, which allows more precise identification of friction points and opportunities.

- Hypothesis generation: Machine learning models surface test ideas from patterns in existing data, reducing the time analysts spend on qualitative research before a test can be built.

- Test efficiency: Predictive algorithms allocate traffic to variants more efficiently, often reaching confident conclusions faster than fixed-window testing approaches.

- Personalization depth: AI enables simultaneous, segment-specific experiences rather than applying a single winning variant to all users regardless of their behavior or intent.

None of this replaces strategic thinking. What it does is compress the time between data and action, which is the primary constraint for most CRO teams. Understanding where AI fits into the optimization cycle makes it easier to see where it delivers the most value.

Key applications of AI CRO

1. Behavioral analysis and user segmentation

AI tools can identify micro-segments in your traffic based on shared behavioral patterns, such as:

- Device type

- Session depth

- Traffic source

- Scroll behavior

- Entry point

Rather than manually defining audience buckets upfront, machine learning models group users by how they actually behave, which tends to produce more relevant and actionable test hypotheses.

For teams with high traffic volume but limited analyst capacity, this is particularly useful. Instead of spending weeks on exploratory data analysis, AI surfaces the segments most likely to convert and the friction points preventing them from doing so. Those insights feed directly into a higher-quality, better-prioritized testing backlog.

2. Predictive analytics

Predictive models analyze historical conversion data to forecast how a given user or segment is likely to behave. This enables two things: proactive optimization, where interventions are targeted at users showing low conversion probability before they drop off, and smarter traffic allocation in tests, where traffic is weighted toward better-performing variants earlier in the experiment cycle.

McKinsey's research on the economic potential of generative AI identifies marketing and sales as one of the largest value pools for AI, with AI-assisted decisioning shown to improve marketing productivity by a meaningful margin in high-data organizations. Applying those capabilities to CRO means fewer wasted test cycles and more experiments that resolve quickly with actionable outcomes.

3. AI-powered A/B and multivariate testing

Traditional A/B testing evaluates one variable at a time. Multivariate testing covers combinations of variables but requires large sample sizes to reach significance. AI-assisted testing improves both approaches, and doing so opens up the testing volume that most CRO programs struggle to sustain manually.

- Automated variant generation: Language models and generative design tools can create copy, headline, layout, and creative variants aligned to brand constraints, dramatically reducing build time for experiments.

- Dynamic traffic allocation: Multi-armed bandit algorithms shift traffic toward better-performing variants in real time, reducing the cost of running losing tests for their full duration.

- Early flagging: AI identifies underperforming variants earlier, cutting down the duration of inconclusive experiments.

- Parallel testing capacity: AI enables teams to test more variables simultaneously without requiring proportionally more traffic to maintain statistical confidence.

Adobe's 2025 Digital Trends report found that organizations using AI to orchestrate and personalize digital experiences reported measurable lifts in customer engagement, with many of the same capabilities underpinning AI-assisted testing and experiment orchestration.
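To make the sample-size constraint on multivariate testing concrete, here is a rough Python sketch. It uses the standard normal-approximation formula for a two-sided, two-proportion test, and it assumes, as a simplification, that every cell in a full-factorial multivariate test needs the same traffic as one A/B arm. The function names, the 3% baseline rate, and the 10% relative lift are illustrative assumptions, not figures from any cited report.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Approximate visitors needed per variant (two-sided two-proportion z-test)."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance threshold
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def full_factorial_traffic(n_per_cell, levels_per_factor):
    """Total traffic if every combination of factor levels gets its own cell."""
    cells = 1
    for levels in levels_per_factor:
        cells *= levels
    return cells * n_per_cell


# Hypothetical inputs: 3% baseline conversion rate, 10% relative lift to detect.
n = sample_size_per_variant(baseline_rate=0.03, relative_lift=0.10)
ab_total = full_factorial_traffic(n, [2])         # one factor, two versions
mvt_total = full_factorial_traffic(n, [2, 2, 2])  # three factors, two versions each

print(f"per variant: {n:,}  A/B total: {ab_total:,}  MVT total: {mvt_total:,}")
```

Under these assumptions a single variant needs a little over 50,000 visitors, and the three-factor test needs four times the traffic of the simple A/B test. That multiplication is the pressure that adaptive allocation and early flagging are meant to relieve.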

4. Personalization at scale

One of the highest-impact applications of AI CRO is real-time personalization. Rather than serving every visitor the same page, AI dynamically adapts content, layouts, offers, and CTAs based on who the visitor is and the signals they’ve sent during their session. This is where the gap between AI-assisted and manual CRO becomes most visible.

McKinsey research shows that effective personalization typically drives a 5 to 15 percent revenue lift and a 10 to 30 percent improvement in marketing spend efficiency. According to Salesforce's State of the Connected Customer 2024 report, the share of global customers who feel companies treat them as individuals rather than numbers rose to nearly three-quarters in 2024, up notably from previous years.

At the same time, customers are increasingly protective of their data and expect clear value in return, making targeted, trustworthy personalization critical rather than optional.

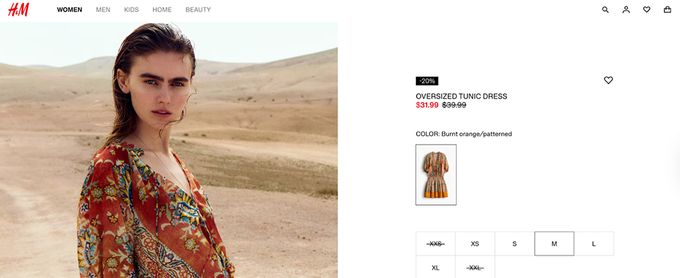

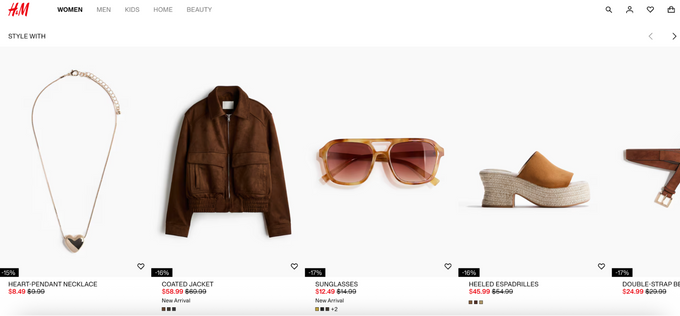

H&M puts this into practice across its product pages. As a shopper browses an item, the page dynamically updates sections like "Style With" to surface complementary pieces, outfits, and close alternatives based on what they are currently viewing. The experience adapts in real time to browsing behavior rather than serving every visitor a static, identical page.

» Ready to personalize at scale? Book a demo with CROforce

5. AI-assisted hypothesis generation

One of the more underused applications of AI in CRO is hypothesis generation. AI systems trained on behavioral data, heatmaps, session recordings, and funnel drop-off patterns can surface test ideas automatically. This reduces the time analysts spend on qualitative review and helps teams maintain a full, prioritized testing backlog.

For B2B and SaaS teams with lower traffic volumes, where each test cycle is more expensive, this is especially useful. Better hypotheses mean fewer wasted tests and a faster path to performance gains.

Erin Choice, CRO Specialist at CROforce

How to scale experiments with AI CRO

Scaling a testing program with AI is not as simple as adding a new tool. It requires a structured approach to data, prioritization, and feedback loops. Each step in this process builds on the one before it, and skipping any one of them tends to undermine the value of the others.

1. Start with clean, unified data

AI systems are only as good as the data they train on. Before introducing AI into your testing program, audit your analytics setup. Ensure events are tracked consistently, session data is complete, and conversion goals are correctly attributed. Fragmented or inconsistent tracking produces unreliable model outputs regardless of how sophisticated the AI layer is.

Deloitte's 2025 marketing research identifies data quality and integration as the top barrier to AI impact, cited by a significant share of surveyed CMOs as the primary constraint on what AI can actually deliver.
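The tracking audit described in this step can start as a simple script run over an export of raw events. The Python sketch below is one possible shape for it, not a reference implementation. The field names (`session_id`, `event`, `timestamp`), the event taxonomy, and the three checks are all hypothetical and would need to match your own analytics schema.

```python
from collections import Counter

# Hypothetical schema and event taxonomy; replace with your own.
REQUIRED_FIELDS = ("session_id", "event", "timestamp")
KNOWN_EVENTS = {"page_view", "add_to_cart", "purchase"}


def audit_events(events):
    """Count basic tracking problems in a list of event dictionaries."""
    issues = Counter()
    # Sessions that contain at least one page view, used for the attribution check.
    sessions_with_page_view = {
        e["session_id"]
        for e in events
        if e.get("event") == "page_view" and e.get("session_id")
    }
    for e in events:
        if any(e.get(field) in (None, "") for field in REQUIRED_FIELDS):
            issues["missing_field"] += 1        # incomplete session data
        elif e["event"] not in KNOWN_EVENTS:
            issues["unknown_event_name"] += 1   # inconsistent naming, e.g. casing
        elif e["event"] == "purchase" and e["session_id"] not in sessions_with_page_view:
            issues["conversion_without_page_view"] += 1  # likely attribution gap
    return dict(issues)


sample_events = [
    {"session_id": "s1", "event": "page_view", "timestamp": 1},
    {"session_id": "s1", "event": "purchase", "timestamp": 2},
    {"session_id": "s2", "event": "Purchase", "timestamp": 3},   # inconsistent casing
    {"session_id": "s3", "event": "purchase", "timestamp": 4},   # no page view recorded
    {"session_id": None, "event": "page_view", "timestamp": 5},  # missing session id
]
report = audit_events(sample_events)
print(report)
```

If any of these counts is a meaningful share of total events, that is a signal to fix tracking before feeding the data to a model.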

2. Define clear experiment prioritization criteria

AI can generate many hypotheses, but not all of them deserve testing. Establish a scoring framework, such as a modified ICE model that weighs impact, confidence, and ease of implementation, to evaluate which AI-generated recommendations align with current business priorities.

This prevents teams from chasing low-value tests simply because the AI flagged them. Your team still decides what matters most this quarter.
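As an illustration of what a modified ICE model might look like, the Python sketch below scores each hypothesis as a weighted average of impact, confidence, and ease, then discounts ideas that do not match this quarter's priorities. The weights, the 50 percent discount, and the example hypotheses are assumptions made up for this example. Your team would set its own.

```python
from dataclasses import dataclass

# Hypothetical weights; classic ICE treats the three factors equally.
WEIGHTS = {"impact": 0.5, "confidence": 0.3, "ease": 0.2}
OFF_PRIORITY_DISCOUNT = 0.5  # hypothetical penalty for off-priority ideas


@dataclass
class Hypothesis:
    name: str
    impact: int       # 1-10: expected effect on the conversion goal
    confidence: int   # 1-10: strength of the supporting evidence
    ease: int         # 1-10: how cheap the test is to build and run
    matches_quarter_priority: bool = True


def ice_score(h):
    """Weighted ICE score, discounted when the idea is off-priority."""
    base = (
        WEIGHTS["impact"] * h.impact
        + WEIGHTS["confidence"] * h.confidence
        + WEIGHTS["ease"] * h.ease
    )
    return base if h.matches_quarter_priority else base * OFF_PRIORITY_DISCOUNT


def rank(hypotheses):
    """Return hypotheses ordered from highest to lowest score."""
    return sorted(hypotheses, key=ice_score, reverse=True)


backlog = [
    Hypothesis("Shorten checkout form", impact=8, confidence=6, ease=4),
    Hypothesis("Rewrite pricing headline", impact=5, confidence=9, ease=9),
    Hypothesis("Redesign blog sidebar", impact=9, confidence=8, ease=7,
               matches_quarter_priority=False),
]
ranked_names = [h.name for h in rank(backlog)]
print(ranked_names)
```

The blog sidebar idea has the highest raw score but drops to the bottom once the priority discount is applied, which is the point of keeping a human-defined filter on AI-generated recommendations.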

3. Use multi-armed bandit testing for high-velocity programs

Multi-armed bandit algorithms are a form of AI-assisted traffic allocation that shifts users toward better-performing variants in real time rather than waiting for a fixed test window to close. For programs running multiple experiments simultaneously, this reduces the cost of running losing variants and allows confident decisions to be made faster.

It also pairs well with always-on testing in high-value funnel stages where waiting weeks for a conventional test to complete is too slow.
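For readers who want to see the mechanics, here is a minimal Python sketch of one common bandit strategy, Thompson sampling with a Beta prior. It is a simulation with made-up conversion rates, not a description of how any particular testing platform allocates traffic. The class name, variant names, and rates are all hypothetical.

```python
import random


class ThompsonSamplingBandit:
    """Beta-Bernoulli Thompson sampling over a set of page variants."""

    def __init__(self, variants, seed=None):
        self.rng = random.Random(seed)
        self.stats = {v: {"conversions": 0, "misses": 0} for v in variants}

    def choose(self):
        # Draw a plausible conversion rate for each variant from its posterior,
        # then serve the variant with the highest draw.
        draws = {
            v: self.rng.betavariate(s["conversions"] + 1, s["misses"] + 1)
            for v, s in self.stats.items()
        }
        return max(draws, key=draws.get)

    def record(self, variant, converted):
        key = "conversions" if converted else "misses"
        self.stats[variant][key] += 1


def simulate(true_rates, visitors, seed=0):
    """Send simulated visitors through the bandit and count traffic per variant."""
    bandit = ThompsonSamplingBandit(true_rates, seed=seed)
    world = random.Random(seed + 1)  # separate source of simulated visitor behavior
    traffic = {v: 0 for v in true_rates}
    for _ in range(visitors):
        variant = bandit.choose()
        traffic[variant] += 1
        bandit.record(variant, world.random() < true_rates[variant])
    return traffic


# Hypothetical true conversion rates, unknown to the bandit.
traffic = simulate({"control": 0.05, "variant_b": 0.15}, visitors=5000, seed=42)
print(traffic)
```

Early on, both variants receive traffic because their posteriors overlap. As evidence accumulates, the stronger variant wins most of the draws, so the weaker one is shown to progressively fewer visitors. The trade-off is that a bandit optimizes for conversions during the test rather than for a clean fixed-sample comparison, so a conventional A/B test can still be the better fit when the estimate of the lift itself matters.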

4. Combine personalization with global A/B testing

AI personalization and A/B testing serve different purposes. Personalization adapts the experience for different segments without declaring a universal winner. A/B testing identifies the best overall change to make to a page or flow.

A mature program uses both in parallel: AI personalization for segment-specific optimization and A/B testing for sitewide improvements. Running them together ensures that highly individualized experiences do not drift away from a coherent brand or product narrative.

5. Build feedback loops into the program

AI CRO systems improve over time, but only if test outcomes feed back into the model. Winning tests, failed hypotheses, and post-implementation performance data should inform future recommendations. This requires consistent tagging of test outcomes and periodic model review to ensure the program continues to improve in relevance and accuracy.
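Consistent tagging is easier to enforce when every finished test is written to the same record shape. The Python sketch below shows one hypothetical structure and a simple summary that could feed back into prioritization. The field names, themes, and result labels are assumptions for illustration only.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ExperimentOutcome:
    test_id: str
    theme: str            # area of the site or funnel, e.g. "checkout"
    result: str           # "win", "loss", or "inconclusive"
    observed_lift: float  # relative lift measured in the test


def win_rate_by_theme(outcomes):
    """Share of tests per theme that ended as wins."""
    tallies = defaultdict(lambda: {"tests": 0, "wins": 0})
    for outcome in outcomes:
        tallies[outcome.theme]["tests"] += 1
        if outcome.result == "win":
            tallies[outcome.theme]["wins"] += 1
    return {theme: t["wins"] / t["tests"] for theme, t in tallies.items()}


# Hypothetical test log.
log = [
    ExperimentOutcome("T-101", "checkout", "win", 0.06),
    ExperimentOutcome("T-102", "checkout", "loss", -0.02),
    ExperimentOutcome("T-103", "pricing", "inconclusive", 0.00),
]
rates = win_rate_by_theme(log)
print(rates)
```

A summary like this can inform the confidence score for new hypotheses in the same theme, and it gives the periodic model review something concrete to check recommendations against.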

What AI CRO looks like in practice

E-commerce

In e-commerce, AI CRO is most commonly applied to checkout flow optimization, product recommendation engines, and dynamic pricing displays. Predictive models identify users with high purchase intent and surface urgency cues or tailored recommendations at the right point in their session.

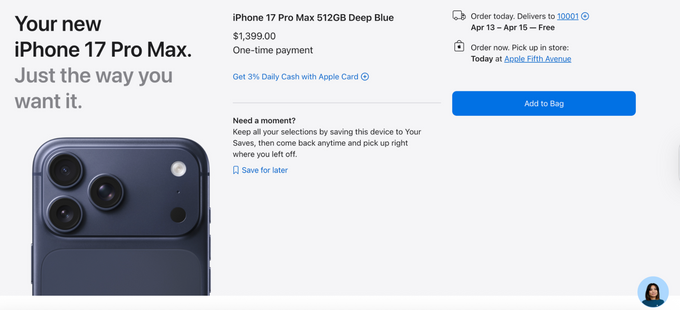

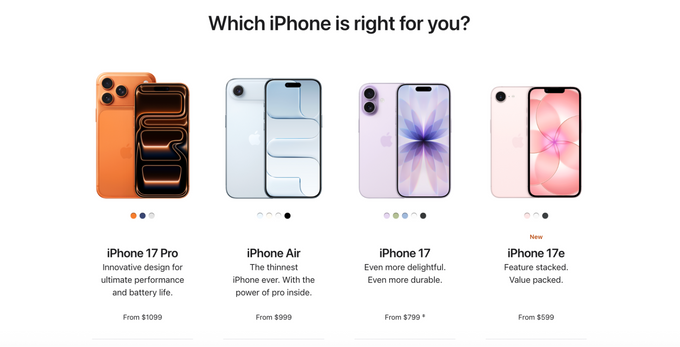

Apple's iPhone comparison and checkout pages show this in action. The "Which iPhone is right for you?" module surfaces product options matched to browsing behavior and price sensitivity, while the checkout page adapts in real time to show localized delivery windows and nearby pickup availability. Both are small, high-intent moments where AI-driven context replaces generic copy.

SaaS and B2B

In SaaS and B2B contexts, AI is frequently used on onboarding flows and pricing pages. Machine learning surfaces where different user cohorts drop off and generates hypotheses for reducing friction at each step. AI also enables account-level personalization, adapting landing page content based on company size, industry, or traffic source in real time.

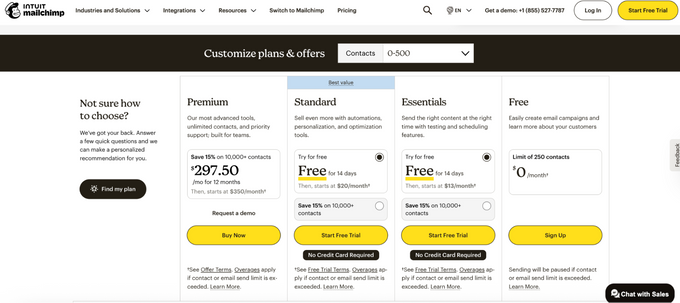

Mailchimp's industry-specific landing pages adapt headlines and messaging to the visitor's professional context, while its pricing page uses an AI-assisted "Find my plan" module to guide visitors toward the right tier based on their inputs, reducing decision friction at the moment it matters most.

Both implementations share a common characteristic: they use AI to do the analysis work that no human team could complete at the same speed or granularity, freeing CRO practitioners to focus on strategy, design, and iteration.

Limitations of AI in CRO

AI CRO is not a replacement for strategic judgment, and it comes with real constraints worth understanding before building a program around it.

- Data dependency: AI requires sufficient behavioral data to generate reliable insights. Low-traffic sites or sites with poorly implemented tracking will see limited benefit from machine learning-based analysis and may need to combine AI tools with aggregated benchmark data to compensate.

- Black-box recommendations: Some AI systems surface recommendations without fully explaining the reasoning behind them. This can make it difficult to build institutional knowledge or validate whether the right objective is being optimized. Favoring systems that expose the key drivers behind a recommendation makes the program easier to govern and learn from over time.

- Personalization without strategy: AI can serve personalized experiences, but personalization without a clear conversion strategy can fragment the user journey rather than improve it. The AI executes; the strategy still has to come from your team.

- Overfitting to historical behavior: Models trained on historical data may optimize for past patterns rather than future performance, particularly during periods of rapid change in user behavior or market conditions. Periodic retraining and human review are essential to keep models aligned with current business goals.

Teams that get the most from AI CRO are those that use it to augment an existing strategic framework rather than treating AI as a substitute for one.

Conclusion

AI conversion rate optimization gives teams the analytical capacity to run more experiments, target more specific user segments, and deliver personalized experiences at a scale that manual methods cannot support. The largest gains come not from replacing human judgment but from removing the bottlenecks that slow testing programs down, whether that is data analysis, hypothesis generation, or traffic allocation.

For teams looking to move from a reactive, low-volume testing program to a systematic, scalable one, AI CRO is where that shift begins.

» Ready to build a program that scales? Talk to a CROforce expert about what a fully managed AI CRO strategy looks like in practice.

FAQs

What types of businesses benefit most from AI CRO?

AI CRO delivers the strongest results for businesses with high traffic volumes, complex funnels, or large product catalogs where manual testing cannot keep pace. E-commerce, SaaS, and marketplace businesses are typically the best fit, though any team running a structured experimentation program can benefit.

How long does it take to see results from AI CRO?

It depends on traffic volume, data quality, and the maturity of the existing testing program. Teams with clean data and sufficient traffic can begin seeing meaningful insights within the first few weeks, with personalization and model improvements compounding over time.

Do you need a dedicated CRO team to use AI CRO?

No, but human judgment is still required for strategy, test design, and result interpretation. AI reduces the analyst hours needed for data review and hypothesis generation, which is why many teams opt for a managed service rather than building the function in-house.

How does AI CRO handle privacy and consent requirements?

AI CRO operates within your existing privacy and consent framework. Tracking needs to comply with regulations like GDPR and CCPA, and personalization should only draw on data collected with explicit user consent. Most reputable platforms include built-in consent management or integrate with existing tools.

What is the difference between AI CRO and AI personalization?

AI personalization is one application within a broader AI CRO program. Personalization adapts experiences for individual users in real time, while AI CRO covers the full optimization cycle, including analysis, hypothesis generation, testing, and measurement. A mature program uses both together.